Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

Learn why AI agents need guardrails and logic-first architecture to prevent hallucinations, control behavior, and build trustworthy autonomous AI systems.

Artificial intelligence agents are becoming more capable every month.

They can search the web, analyze data, write content, interact with APIs, and automate workflows.

But capability alone does not create reliability.

Without proper constraints, AI agents can behave unpredictably. They may hallucinate information, misuse tools, or drift away from the original goal.

This is why designing AI systems requires more than prompts and powerful models.

It requires guardrails.

Guardrails and logic-first architecture are what transform experimental AI agents into systems that businesses can trust.

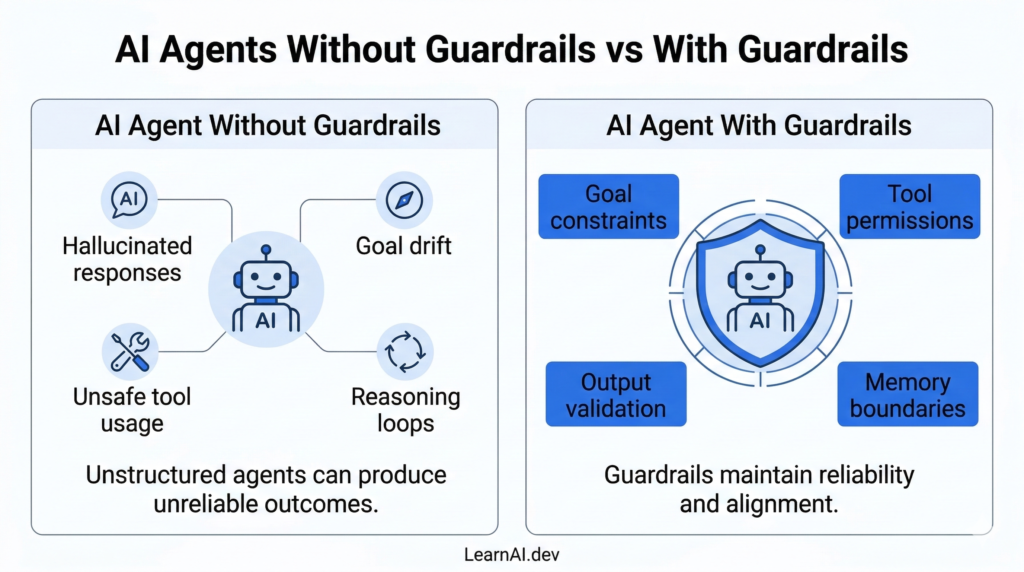

AI agents are designed to pursue goals. However, when those goals lack structure, several problems arise.

Large language models sometimes generate information that sounds correct but is factually wrong. When an agent acts on this information, the entire workflow can break.

Agents may slowly move away from the original objective, especially in long reasoning chains.

If an agent has access to tools such as APIs or databases, incorrect reasoning can lead to unintended actions.

Agents that repeatedly attempt the same step without progress can consume resources and stall systems.
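The looping problem in particular lends itself to a simple mechanical limit. The following is a minimal sketch, not a reference implementation: `run_with_budget`, `StepBudgetExceeded`, and the `max_steps` default are hypothetical names and values chosen for illustration, and the agent's state is assumed to be hashable so that repeats can be detected.

```python
from typing import Callable, Hashable


class StepBudgetExceeded(RuntimeError):
    """Raised when the agent runs out of steps or stops making progress."""


def run_with_budget(
    step: Callable[[Hashable], Hashable],
    state: Hashable,
    is_done: Callable[[Hashable], bool],
    max_steps: int = 10,  # assumed default; tune per workflow
) -> Hashable:
    """Run `step` repeatedly, stopping on success, a repeated state, or budget exhaustion."""
    seen = {state}
    for _ in range(max_steps):
        if is_done(state):
            return state
        state = step(state)
        # A state we have already visited means the agent is going in circles.
        if state in seen:
            raise StepBudgetExceeded(f"no progress: state {state!r} repeated")
        seen.add(state)
    if is_done(state):
        return state
    raise StepBudgetExceeded(f"gave up after {max_steps} steps")
```

In a real agent, `step` would wrap a model or tool call. The point of the sketch is only that the loop, not the model, decides when to stop.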

These issues are not simply a sign of a bad model. They become serious when systems are built without proper control mechanisms.

Guardrails solve this problem.

Guardrails are rules, constraints, and validation mechanisms that guide an AI agent’s behavior.

They limit what the agent can do, how it reasons, and how its outputs are evaluated.

Instead of allowing an agent to operate without boundaries, guardrails ensure the system stays aligned with the intended goal.

Common guardrails include:

Role constraints that define what each agent is allowed to do.

Tool restrictions that control which external systems an agent can access.

Output validation layers that check results before they are accepted.

Goal verification steps that confirm the agent is still solving the correct problem.

Guardrails do not diminish an AI system’s capabilities.

They increase reliability.

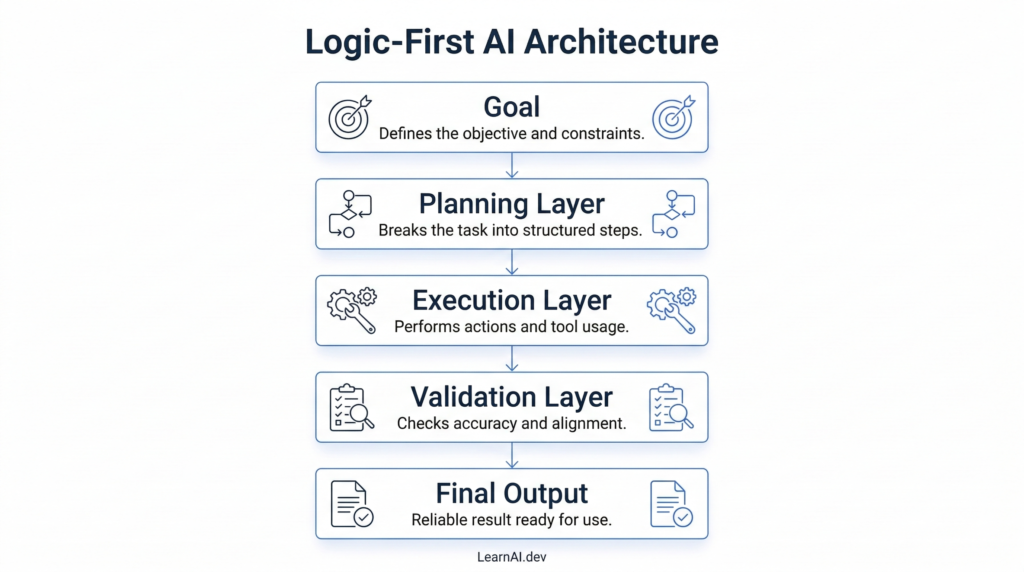

One of the most effective ways to design reliable AI agents is through logic-first architecture.

In this approach, the workflow is defined before the agent begins reasoning.

Instead of asking an AI system to solve everything at once, the process is broken into clear stages.

A common structure looks like this:

Goal -> Plan -> Execute -> Validate

The agent first understands the goal.

Then it creates a plan of action.

Next, it performs the required tasks.

Finally, the output is validated before being accepted.

This structure ensures that reasoning remains organized and predictable.
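The four stages can be sketched as an explicit pipeline in which the control flow lives in ordinary code rather than in the model's reasoning. This is an illustrative outline under assumptions: `run_workflow`, `WorkflowResult`, and the three callables are hypothetical names, and in practice `plan_fn` and `execute_fn` would wrap model or tool calls.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class WorkflowResult:
    goal: str
    plan: list[str]
    outputs: list[str] = field(default_factory=list)
    accepted: bool = False


def run_workflow(
    goal: str,
    plan_fn: Callable[[str], list[str]],
    execute_fn: Callable[[str], str],
    validate_fn: Callable[[str, list[str]], bool],
) -> WorkflowResult:
    """Goal -> Plan -> Execute -> Validate, with each stage as a separate call."""
    # Plan: turn the goal into an ordered list of tasks.
    plan = plan_fn(goal)
    result = WorkflowResult(goal=goal, plan=plan)
    # Execute: perform each planned task in order.
    for task in plan:
        result.outputs.append(execute_fn(task))
    # Validate: the output is only marked accepted if the check passes.
    result.accepted = validate_fn(goal, result.outputs)
    return result
```

Because the stage order is fixed in code, the agent cannot skip validation or start executing before a plan exists.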

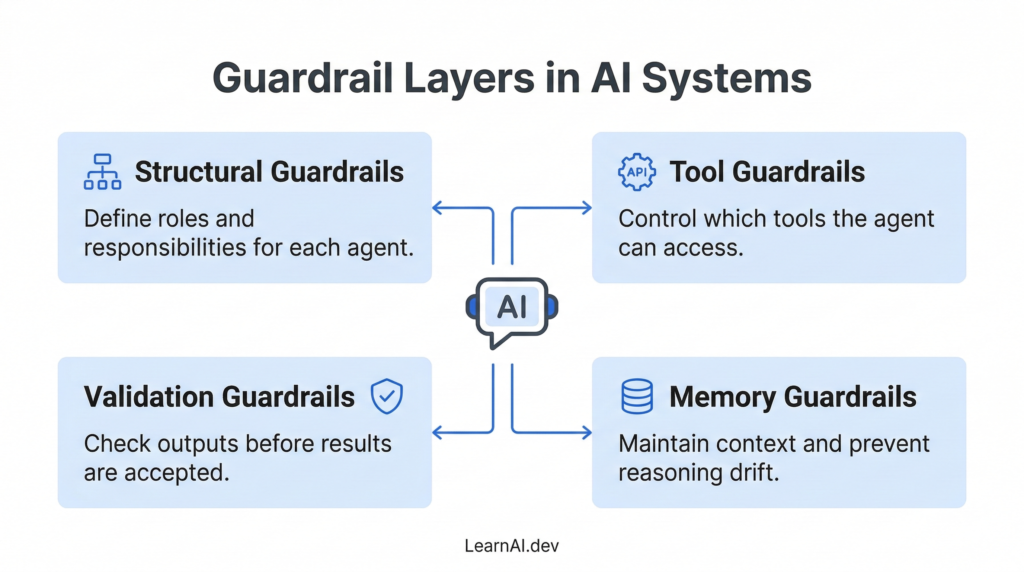

Guardrails can exist at several layers of the architecture.

Each layer helps control a different part of the system.

Structural guardrails define the roles within the system.

For example:

A research agent collects data.

A writer agent produces output.

A reviewer agent checks accuracy.

Each agent performs a specific task rather than attempting to solve the entire workflow.
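One way to make these role boundaries enforceable rather than advisory is a permission table checked before any action runs. The sketch below is only an assumption about how that could look; the role and action names are taken from the example above, while `ROLE_PERMISSIONS` and `authorize` are hypothetical.

```python
# Hypothetical mapping from each role to the actions it may perform.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "researcher": {"collect_data"},
    "writer": {"produce_output"},
    "reviewer": {"check_accuracy"},
}


def authorize(role: str, action: str) -> None:
    """Raise PermissionError unless `role` is explicitly allowed to perform `action`."""
    allowed = ROLE_PERMISSIONS.get(role, set())  # unknown roles get no permissions
    if action not in allowed:
        raise PermissionError(f"{role!r} may not perform {action!r}")
```

Calling `authorize("writer", "check_accuracy")` raises, so a writer agent cannot quietly approve its own output.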

Agents often interact with external systems such as APIs, search engines, or databases.

Tool guardrails define:

Which tools an agent can use

When the tool can be used

What type of input is allowed

This prevents misuse of sensitive or critical systems.
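The three questions above (which tool, when, and with what input) can be checked in one place before a tool call is dispatched. The following sketch assumes hypothetical tool names (`web_search`, `sql_read`), stage names, and input rules; the `SELECT` prefix test is deliberately naive and is not a substitute for real query sanitization.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ToolPolicy:
    allowed_stages: frozenset[str]      # when the tool may be used
    input_ok: Callable[[str], bool]     # what input is allowed


# Hypothetical allowlist: any tool not listed here is rejected outright.
POLICIES: dict[str, ToolPolicy] = {
    "web_search": ToolPolicy(
        frozenset({"research"}),
        lambda q: 0 < len(q) <= 200,
    ),
    "sql_read": ToolPolicy(
        frozenset({"research", "analysis"}),
        lambda q: q.strip().lower().startswith("select"),  # naive read-only check
    ),
}


def check_tool_call(tool: str, stage: str, tool_input: str) -> None:
    """Raise if the call violates the allowlist, the stage rule, or the input rule."""
    policy = POLICIES.get(tool)
    if policy is None:
        raise PermissionError(f"tool {tool!r} is not on the allowlist")
    if stage not in policy.allowed_stages:
        raise PermissionError(f"tool {tool!r} may not be used during {stage!r}")
    if not policy.input_ok(tool_input):
        raise ValueError(f"input rejected for tool {tool!r}")
```

Keeping the policy in data rather than in the prompt means it holds even when the model's reasoning goes wrong.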

Validation guardrails ensure that the output meets defined standards.

This may include:

Fact checking

Data consistency checks

Goal alignment verification

Validation layers often appear as reviewer agents in multi-agent systems.
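A validation layer does not have to be another model. Simple deterministic checks can run first and catch obvious failures cheaply. The sketch below assumes a report is a plain dictionary with hypothetical keys (`goal`, `summary`, `sources`, `prices`); the individual checks are crude stand-ins for source checking, data consistency, and goal alignment, not real fact checking.

```python
from typing import Callable, Optional

# A check returns None on success or a short failure message.
Check = Callable[[dict], Optional[str]]


def has_sources(report: dict) -> Optional[str]:
    return None if report.get("sources") else "no sources cited"


def prices_consistent(report: dict) -> Optional[str]:
    bad = [name for name, price in report.get("prices", {}).items() if price < 0]
    return f"negative price for {bad}" if bad else None


def on_topic(report: dict) -> Optional[str]:
    # Very rough goal-alignment test: the summary must share a word with the goal.
    goal_terms = set(report.get("goal", "").lower().split())
    summary_terms = set(report.get("summary", "").lower().split())
    return None if goal_terms & summary_terms else "summary shares no terms with the goal"


CHECKS: list[Check] = [has_sources, prices_consistent, on_topic]


def validate(report: dict, checks: list[Check] = CHECKS) -> list[str]:
    """Run every check and return the list of failure messages (empty means accepted)."""
    return [msg for check in checks if (msg := check(report)) is not None]
```

Returning all failures instead of stopping at the first gives a reviewer agent, or a human, the full picture in one pass.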

Agents rely on memory to track context and previous actions.

Without control, memory can grow too large or become inconsistent.

Memory guardrails help maintain:

Relevant context

Limited history size

Clear separation between tasks

This reduces context drift and improves reasoning accuracy.
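Two of these properties, limited history size and separation between tasks, can be sketched with standard-library containers. `TaskMemory` and its `max_items` default are hypothetical; deciding which entries are actually relevant is a harder problem that this sketch does not attempt.

```python
from collections import defaultdict, deque


class TaskMemory:
    """Per-task history with a hard cap on how many entries are kept."""

    def __init__(self, max_items: int = 20):  # assumed cap; tune per workflow
        # Each task gets its own bounded deque, so histories never mix.
        self._history: defaultdict[str, deque] = defaultdict(
            lambda: deque(maxlen=max_items)
        )

    def remember(self, task_id: str, entry: str) -> None:
        # When the deque is full, appending silently drops the oldest entry.
        self._history[task_id].append(entry)

    def context(self, task_id: str) -> list[str]:
        """Return the retained entries for one task, oldest first."""
        return list(self._history.get(task_id, ()))
```

With `max_items=2`, remembering three entries for a task leaves only the two most recent, and asking for another task's context returns an empty list.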

Consider an AI system designed to generate competitive intelligence reports.

Without guardrails, a single agent might attempt to:

Search for competitor information

Analyze pricing data

Write the report

Validate the results

If any part of the reasoning fails, the final report may contain inaccurate conclusions.

With guardrails, the system becomes structured.

A planner agent defines the research scope.

A research agent collects data.

An analysis agent identifies trends.

A reviewer agent validates the findings.

Each layer adds reliability and prevents incorrect reasoning from spreading through the workflow.
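The way a checkpoint stops an error from spreading can be shown with a small pipeline runner. This is a sketch under assumptions: `run_pipeline`, `CheckpointFailed`, and the stage tuple layout are hypothetical, and each agent is represented by a plain function standing in for a model-backed component.

```python
from typing import Any, Callable

# (stage name, agent function, checkpoint that must approve the agent's output)
Stage = tuple[str, Callable[[Any], Any], Callable[[Any], bool]]


class CheckpointFailed(RuntimeError):
    """Raised when a stage's output is rejected before reaching the next stage."""


def run_pipeline(stages: list[Stage], initial: Any) -> Any:
    """Pass data through each agent in order, halting at the first failed checkpoint."""
    data = initial
    for name, agent, checkpoint in stages:
        data = agent(data)
        if not checkpoint(data):
            # Stop here so a bad intermediate result never reaches later agents.
            raise CheckpointFailed(f"{name} output rejected; pipeline halted")
    return data
```

For the report example, the stages would be the planner, research, analysis, and reviewer agents in that order. If the research stage returns no data, the analysis agent never runs on it.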

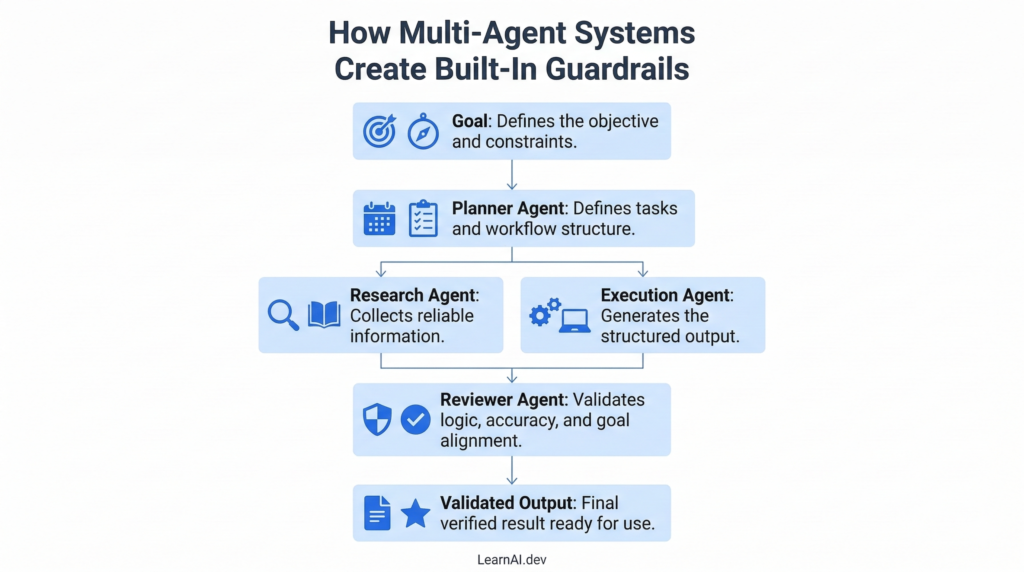

Multi-agent systems naturally introduce guardrails through specialization.

Different agents handle different responsibilities.

For example:

Planner agents define tasks.

Research agents collect information.

Execution agents perform actions.

Reviewer agents validate results.

This layered approach creates natural checkpoints within the system.

Instead of trusting a single reasoning chain, the system distributes responsibility across multiple agents.

This reduces error propagation and improves consistency.

Businesses cannot deploy systems that behave unpredictably.

Automation must be reliable, repeatable, and aligned with defined goals.

Guardrails enable this reliability by ensuring that agents operate within structured boundaries.

When properly designed, AI systems become more than experimental tools.

They become dependable components of business operations.

Trust is built through architecture, not just intelligence.

As AI agents become more autonomous, the importance of guardrails will continue to grow.

Organizations will increasingly focus on designing systems that combine intelligence with control.

The most successful AI implementations will not be the ones with the most freedom.

They will be the ones with the most thoughtful structure.

AI agents are powerful.

But power without structure leads to unpredictable behavior.

Guardrails provide the rules and validation layers that guide agent behavior and maintain alignment with business goals.

Logic-first architecture ensures that AI systems follow structured workflows rather than uncontrolled reasoning.

Together, these principles transform experimental agents into trustworthy systems.

And trust is what ultimately determines whether AI remains an experimental tool or becomes a dependable part of the modern workforce.
